The following was taken from a presentation by Sally Cowling, Director of Research, Innovation and Advocacy for UnitingCare Children, Young People and Families. The presentation was to the Social Impact Measurement Network of Australia (SIMNA) New South Wales chapter on March 11 2015. Sally was discussing the measurement aspects of the Newpin Social Benefit Bond, which is referred to as a social impact bond in this article for an international audience.

The social impact bond (called Social Benefit Bond in New South Wales) was something very new for us. The Newpin (New Parent and Infant Network) program had been running for a decade supported by our own investment funding, and our staff were deeply committed to it. When our late CEO, Jane Woodruff, appointed me to our SIB team she said my role was to ‘make sure this fancy financial thing doesn’t bugger Newpin up’.

One of the important steps in developing a social impact bond is to develop a counterfactual. This estimates what would have happened to the families and children involved in Newpin without the program, the ‘business as usual’ scenario. This was the hardest part of the SIB. The Newpin program works with families so that they become strong enough for their children to be restored to them from care. But the administrative data didn’t enable us to compare groups of potential Newpin families by risk profile and so determine the probability that children in care would be restored to their families. We needed to do this to estimate the difference the program could make for families, and to assess the extent to which Newpin would realise government savings.

Experimenting with randomised control trials

NSW Family and Community Services (FACS) were keen to randomly allocate families to Newpin as an efficient means to compare family restoration and preservation outcomes for those who were in our program and those who weren’t. A randomised control trial is generally considered the ‘gold standard’ in the measurement of effect, so that’s where we started.

One of my key lessons from my Newpin practice colleagues was the importance of their relationships and conversations with government child protection (FACS) staff when determining which families were ready for Newpin and had a genuine probability (much lower than 100%) of restoration. When random allocations were first flagged I thought ‘this will bugger stuff up’.

To the credit of FACS they were willing to run an experiment involving local Newpin Coordinators and their colleagues in child protection services. We created some basic Newpin eligibility criteria and FACS ran a list from their administrative data and randomly selected 40 families (all of whom were de-identified) for both sets of practitioners to consider. A key part of the experiment was for the FACS officer with access to the richer data in case files to add notes. Through these notes and conversations it was quickly clear that a lot of mothers and fathers on the list weren’t ready for Newpin because:

- One was living in South America

- A couple had moved interstate

- One was in prison

- One had subsequent children who had been placed into care

- One was co-resident with a violent and abusive partner – a circumstance that needed to be addressed before they could commence Newpin

From memory, somewhere between 15 and 20 percent of our automated would-be-referrals would have been a good fit for the program. It was enlightening to be one of the non-practitioners in the room listening to specialists exchange informed, thoughtful views about who Newpin could have a serious chance at working for. This experiment was a ‘light bulb moment’ for all of us. For both the government and our SIB teams, randomisation was off the table. Not only was the data not fit for that purpose, we all recognised the importance of maintaining professional relationships.

In hindsight, I think the ‘experiment’ was also important to building the trust of our Newpin staff in our negotiating team. They saw an economist and accountant listening to their views and engaging in a process of testing. They saw that we weren’t prepared to trade off the fidelity and integrity of the Newpin program to ‘get’ a SIB and that we were thinking ethically through all aspects of the program. We were a team and all members knew where they did and didn’t have expertise.

Ultimately Newpin is about relationships. Not just the relationships between our staff and the families they work with, but the relationship between our staff and government child protection workers.

But we still had the ‘counterfactual problem’! The joint development phase of the SIB – in which we had access to unpublished and de-identified government data under strict confidentiality provisions – convinced me that we didn’t have the administrative data needed to come up with what I had come to call the ‘frigging counterfactual’ (in my head the adjective was a bit sharper!). FACS suggested I come up with a way to ‘solve’ the problem and they would do their best to get me good proxy data. As the deadline was closing in, I remember a teary, pathetic midnight moment willing that US-style admin data had found a home in Australia.

Using historical data from case files

Eventually you have to stop moping and start working. I decided to go back to the last three years of case files for the Newpin program. Foster care research is clear that the best predictor of whether a child in the care system will be restored to their family is duration in care. We profiled all the children we had worked with, their duration in care prior to entry to Newpin and intervention length. FACS provided restoration and reversal rates in a matrix structure, and matching these against our profiles allowed us to estimate the counterfactual (the percentage of children who would be restored without a Newpin intervention). If we worked with the same group of families (that is, the same duration of care profiles) under the SIB that we had in the previous three years, the counterfactual would be 25%.
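As a rough illustration of the matching step described above, the sketch below weights baseline restoration rates by a cohort's duration-in-care profile. It is a simplified reading, not the method actually used: the duration bands, the rates and the cohort are all invented for the example (they are not the FACS matrix or Newpin case data), and it ignores reversal rates and intervention length.

```python
from collections import Counter

# HYPOTHETICAL baseline restoration rates by duration-in-care band.
# Invented for illustration only; these are NOT the FACS figures.
BASELINE_RESTORATION_RATE = {
    "under_1_year": 0.40,
    "1_to_2_years": 0.25,
    "over_2_years": 0.10,
}


def estimate_counterfactual(duration_bands, baseline_rates):
    """Average the baseline rates, weighted by how many children fall in each band."""
    if not duration_bands:
        raise ValueError("cohort is empty")
    counts = Counter(duration_bands)
    total = len(duration_bands)
    return sum(baseline_rates[band] * n / total for band, n in counts.items())


# HYPOTHETICAL cohort: one entry per child, giving their duration-in-care band.
cohort = (
    ["under_1_year"] * 20
    + ["1_to_2_years"] * 50
    + ["over_2_years"] * 30
)

rate = estimate_counterfactual(cohort, BASELINE_RESTORATION_RATE)
print(f"Estimated counterfactual restoration rate: {rate:.1%}")
```

The point of the weighting is that the estimate depends on who is in the cohort: a group with longer durations in care would produce a lower counterfactual from the same rate table.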

As we negotiated the Newpin Social Benefit Bond contract with the NSW Government we did need to acknowledge that a SIB issue had never been put to the Australian investment market and we needed to provide some protection for investors. We negotiated a fixed counterfactual of 25% for the first three years of the SIB. That means that the Newpin social impact bond is valued and paid on the restoration rate we can achieve over 25%. Thus far, our guesses have been remarkably accurate. To the government’s immense credit, they are building a live control group that will act as the counterfactual after the first three years. This is very resource intensive but the government was determined to make the pilot process as robust as possible.
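To make ‘the restoration rate we can achieve over 25%’ concrete, here is a minimal sketch of that margin. Only the 25% fixed counterfactual comes from the text. The function name and the cohort numbers are hypothetical, and the contract's actual payment formula is not described here, so the sketch stops at the percentage-point margin rather than a dollar amount.

```python
FIXED_COUNTERFACTUAL = 0.25  # fixed for the first three years, per the article


def margin_above_counterfactual(restored, total, counterfactual=FIXED_COUNTERFACTUAL):
    """Return the restoration rate in excess of the counterfactual, floored at zero."""
    if total <= 0:
        raise ValueError("total must be positive")
    if not 0 <= restored <= total:
        raise ValueError("restored must be between 0 and total")
    achieved = restored / total
    return max(0.0, achieved - counterfactual)


# HYPOTHETICAL cohort: 60 of 100 children restored (not real Newpin results).
margin = margin_above_counterfactual(restored=60, total=100)
print(f"Restoration rate above counterfactual: {margin:.1%}")
```

Flooring the margin at zero is a choice made for this sketch; how the bond treats a restoration rate below the counterfactual is not stated in the text.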

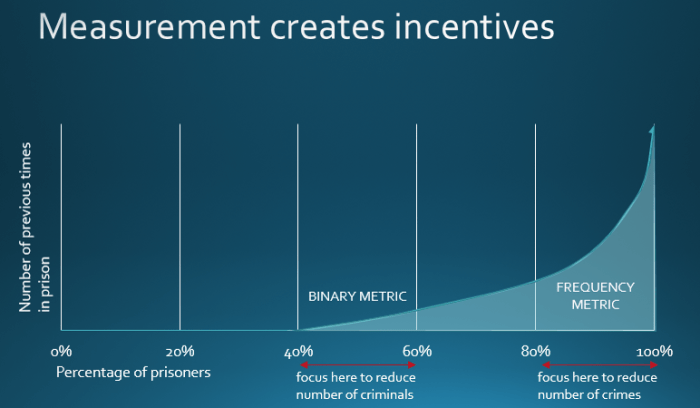

In terms of practice culture, I can’t emphasise enough the importance of thinking ethically. We had to keep asking ourselves, ‘Does this financial structure create perverse incentives for our practice?’ The matched control group and tightly defined eligibility criteria remove incentives for ‘cherry picking’ (choosing easier cases). The restoration decisions that are central to the effectiveness of the program are made independently by the NSW Children’s Court and we need to be confident that children can remain safely at home. If a restoration breaks down within 12 months our performance payment for that result is returned to the government. For all of us involved in the Newpin Social Benefit Bond project, behaving thoughtfully and ethically and protecting the integrity of the Newpin program has been our raison d’être. That under the bond the program is achieving better results for a much higher-risk group of families, and spawning practice innovation, is a source of joy which is true to our social justice ethos.