There’s been a lot of discussion over the past few weeks as to whether Rikers Island was a success or failure and what that means for the SIB ‘market’. You can read the Huffington Post learning and analyses from investors and the Urban Institute as to the benefits and challenges of this SIB. But I think the success and failure discussion fails to recognise the differences in objectives and approaches between SIBs. So I’d like to elaborate on one of these differences, and that’s the attitude towards continuous adaptation of the service delivery model. Some SIBs are established to test whether a well-defined program will work with a particular population. Some SIBs are established to develop a service delivery model – to meet the needs of a particular population as they are discovered.

1. Testing an evidence-based service-delivery model

This is where a service delivery model is rigorously tested to establish whether it delivers outcomes to this particular population under these particular conditions, funded in this particular way. These models are often referred to as ‘evidence-based programs’ that have been rigorously evaluated. The US is further ahead than other countries in the evaluation of social programs, so while these ‘proven’ programs are still in the minority, there are more of them in the US than elsewhere. These SIBs are part of a movement to support and scale programs that have proven effective. They are also part of a drive to more rigorously evaluate social programs, which has resulted in some evaluators attempting to keep all variables constant throughout service delivery.

An evidence-based service delivery model might:

- be used to test whether a service delivery model that worked with one population will work with another;

- be implemented faithfully and adhered to;

- change very little over time; in fact, effort may be made to keep all variables constant, e.g. by prescribing the service delivery model in the contract;

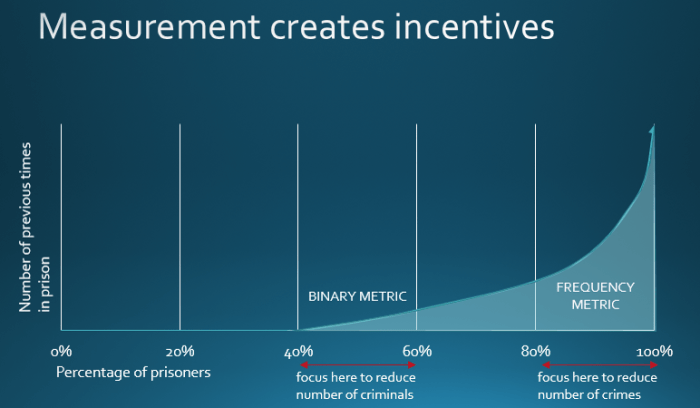

- have a measurement focus that answers the question ‘was this service model effective with this population’?

“SIBs are a tool to scale proven social interventions. SIBs could fill a critical void: other than market-based approaches, a structured and replicable model for scaling proven solutions has not existed previously. SIBs can give structure to the critical handoff between philanthropy (the risk capital of social innovation) and government (the scale-up capital of social innovation) to bring evidence-based interventions to more people.” (McKinsey (2012) From potential to action: Bringing social impact bonds to the US, p.7).

2. Developing a service delivery model

This is where you do whatever it takes to deliver outcomes, so that the service is constantly evolving. It may include an evidence-based prescriptive service model or a combination of several well-evidenced components, but is expected to be continuously tested and adjusted. It may be coupled with a flexible budget (e.g. Peterborough and Essex) to pay for variations and additional services that were not initially foreseen. This approach is more prevalent in the UK.

A continuously adjusted service delivery model might:

- be used to deliver services to populations that have previously not received services, to see whether outcomes could be improved;

- involve every element of service delivery being continuously analysed and refined in order to achieve better outcomes;

- continuously evolve – the program keeps adapting to need as needs are uncovered;

- have a measurement focus that answers the question ‘were outcomes changed for this population’?

Andrew Levitt of Bridges Ventures, the biggest investor in SIBs in the UK, says: “There is no such thing as a proven intervention. Every intervention can be better and can fail if it’s not implemented properly – it’s so harmful to start with the assumption that it can’t get better.” (Tomkinson (2015) Delivering the Promise of Social Outcomes: The Role of the Performance Analyst p.18)

Different horses for different courses

The New York City SIB was designed to test whether the Adolescent Behavioral Learning Experience (ABLE) program would reduce reoffending among young offenders exiting Rikers Island. Fidelity to the designated service delivery model was prioritised, in order to obtain robust evidence of whether this particular program was effective. WNYC News reported that “Susan Gottesfeld of the Osborne Association, the group that worked with the teens, said teens needed more services – like mental health care, drug treatment and housing assistance – once they left the jail and were living back in their neighbourhoods.”

In a July 28 New York Times article by Eduardo Porter, Elizabeth Gaynes, Chief Executive of the Osborne Association, is quoted as saying: “All they were testing is whether M.R.T. by itself would make a difference, not whether something you could do in a jail would make a difference. Even if we could have raised money to do other stuff, we were not allowed to because we were testing M.R.T. alone.”

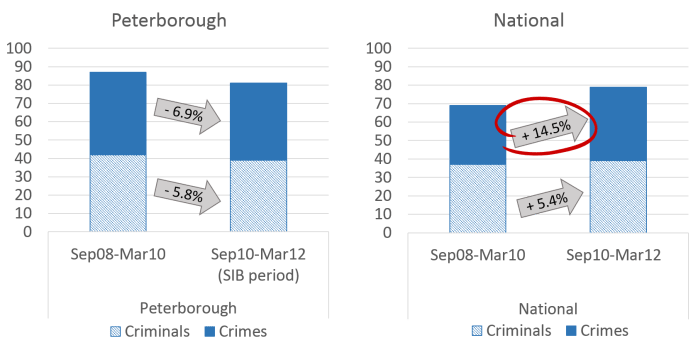

This is in stark contrast with the approach taken in the Peterborough SIB. Their performance management approach was a continuous process of identifying these additional needs and procuring services to meet them. The Peterborough SIB involved many adjustments to its service over the course of delivery. For example, mental health support was added, providers changed, a decision was made to meet all prisoners at the gate… as needs were identified, the model was adjusted to respond. (For more detail, see Learning as They Go p.22, Nicholls, A., and Tomkinson, E. (2013). Case Study: The Peterborough Pilot Social Impact Bond. Oxford: Saïd Business School, University of Oxford.)

Neither approach is necessarily right or wrong, but we should avoid painting one SIB a success or failure according to the objectives and approach of another. What I’d like to see is a question for each SIB: ‘What is it you’re trying to learn/test?’ It won’t be the same for every SIB, but making this clear from the start allows for analysis at the end that reflects that learning and moves us forward. As each program finishes, let’s not waste time on ‘Success or failure?’; let’s get stuck into: ‘So what? Now what?’

Huge thanks to Alisa Helbitz and Steve Goldberg for their brilliant and constructive feedback on this blog.