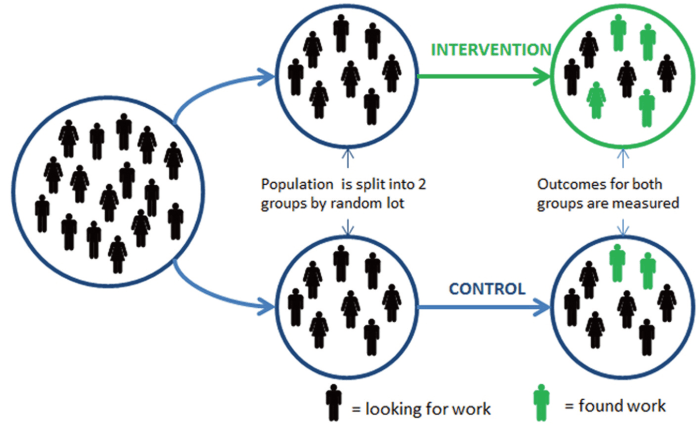

The basic design of a randomised controlled trial (RCT), illustrated with a test of a new ‘back to work’ programme (Haynes et al., 2012, p. 4).
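
For readers who think in code, the design in the figure can be roughly sketched as below. This is only an illustrative sketch, not anything from the paper: the function names `run_trial` and `simulated_outcome`, the 50/50 split and the made-up success probabilities are all assumptions chosen for the example.

```python
import random


def run_trial(participants, outcome_fn, seed=0):
    """Randomly split participants into two groups and compare outcome rates.

    outcome_fn(person, treated) is a hypothetical callback that returns True
    if the person achieved the outcome of interest (e.g. found work).
    """
    rng = random.Random(seed)
    shuffled = list(participants)
    rng.shuffle(shuffled)  # random assignment is the key step in an RCT

    half = len(shuffled) // 2
    intervention = shuffled[:half]   # offered the new programme
    control = shuffled[half:]        # carries on with the existing service

    intervention_rate = sum(outcome_fn(p, True) for p in intervention) / len(intervention)
    control_rate = sum(outcome_fn(p, False) for p in control) / len(control)

    return {
        "intervention": intervention,
        "control": control,
        "intervention_rate": intervention_rate,
        "control_rate": control_rate,
        "difference": intervention_rate - control_rate,
    }


# Assumption: invented probabilities, purely to make the sketch runnable.
_outcome_rng = random.Random(42)


def simulated_outcome(person, treated):
    """Pretend outcome: 40% find work if treated, 30% otherwise (made up)."""
    return _outcome_rng.random() < (0.40 if treated else 0.30)


if __name__ == "__main__":
    result = run_trial(range(1000), simulated_outcome)
    print(f"Intervention group: {result['intervention_rate']:.1%} found work")
    print(f"Control group:      {result['control_rate']:.1%} found work")
    print(f"Difference:         {result['difference']:.1%}")
```

Because the two groups are formed by chance, the control group stands in for what would have happened without the programme, so the difference in rates can be read as an estimate of the programme's effect. A real trial would also need a sample size calculation and a test of whether the difference could be due to chance.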

In 2012, Laura Haynes, Owain Service, Ben Goldacre & David Torgerson wrote the fantastic paper Test, Learn, Adapt: Developing Public Policy with Randomised Controlled Trials. They begin the paper by making the case for RCTs with the following four points.

1. We don’t necessarily know ‘what works’ – “confident predictions about policy made by experts often turn out to be incorrect. RCTs have demonstrated that interventions which were designed to be effective were in fact not”.

2. RCTs don’t have to cost a lot of money – “The costs of an RCT depend on how it is designed: with planning, they can be cheaper than other forms of evaluation.”

3. There are ethical advantages to using RCTs – “Sometimes people object to RCTs in public policy on the grounds that it is unethical to withhold a new intervention from people who could benefit from it.” “If anything, a phased introduction in the context of an RCT is more ethical, because it generates new high quality information that may help to demonstrate that an intervention is cost effective.”

4. RCTs do not have to be complicated or difficult to run – “It is much more efficient to put a smaller amount of effort [than a post-intervention impact evaluation] into the design of an RCT before a policy is implemented.”

Laura and her team are making a huge difference to the way the UK Government perceives and implements RCTs.

The World Bank has also published some fantastic guidance in their Impact Evaluation Overview. This includes information about their Development Impact Evaluation (DIME) initiative, which has the following objectives:

- “To increase the number of Bank projects with impact evaluation components;
- To increase staff capacity to design and carry out such evaluations;
- To build a process of systematic learning based on effective development interventions with lessons learned from completed evaluations.”

I’ve popped both these resources on the Social Impact Bond Knowledge Box page Comparisons and the counterfactual, but I thought they were so valuable that it was worth expanding on them here.