On a recent trip to the US, I noticed that the discussions around ‘Pay for Success’ were a little different to those I’d been having on ‘Social Impact Bonds (SIBs)’ with other countries. Particularly in the measurement community, there was an idea that Pay for Success took measurement of social programs to a new level: that ‘Pay for Success’ meant paying for an effect size (by comparison to a control group), rather than ‘Pay for Performance’ which paid for the number of times something occurred.

UK

Public trust and confidence in charities only 6.6%?

The UK Charity Commission is one of the models feeding into the nearly-established Australian Charities and Not-for-profits Commission, so we learn from the triumphs and failures that have come before us. Here’s a lesson: always check your units!

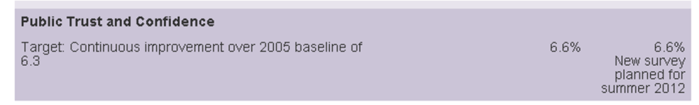

The Charity Commission Annual Report includes “Our performance this year”, which reports against a range of inputs and outputs. And I congratulate them for leading by example and doing this. It’s very clear and accessible. There is one outcome for 2011-12:

And the results are (final two column headers are 2010-11, 2011-12):

Does that look a little low to you? They’re obviously improving, but 6.6% ain’t a whole lot of trust! Let’s check out the source…

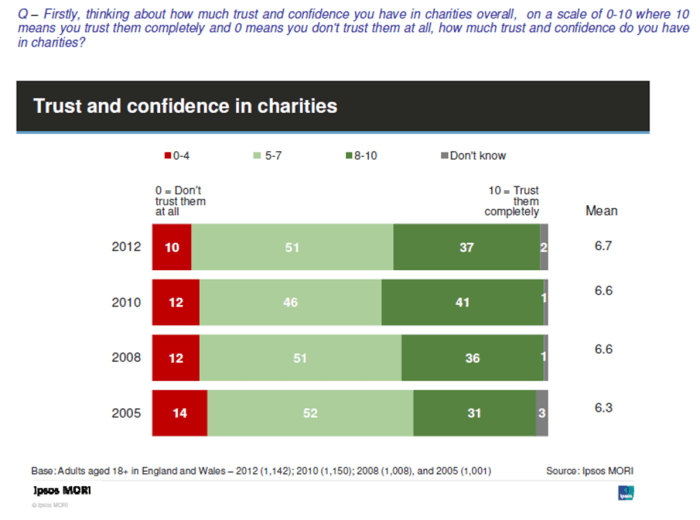

Here is the extract from Ipsos MORI’s 2012 report for the Charity Commission – note the question at the top. (The Annual Report above looks like it used the 2010 data.)

Ok, that’s enough of the point and laugh. I would absolutely recommend reading the full Ipsos MORI report – there are further questions on what respondents trusted charities to do, why they trusted them more or less and how much this was affected by their own engagement with the charity sector.

I’m so glad they gave charities 6.7 out of 10, not 100 – that’s a little more optimistic!